Berkeley Agentic AI + Advanced LLM Agents

Learn agents by the problems they solve, not by memorizing buzzwords.

Two public Berkeley courses converted into a problem-first study system: each video becomes a focused page with the core failure mode, solution pattern, visual model, practice drills, and frontier references.

Mental model

Agent capability stack

Click any video page when a node feels weak.

UC Berkeley CS294/194-196

Agentic AI MOOC Fall 2025

Agentic AI safety and security

Agents can take actions, so prompt injection, tool misuse, memory poisoning, and privilege escalation become operational risks.

Autonomous embodied agents

Embodied agents must act in environments where observations are delayed, partial, and physically grounded.

LLM-era multi-agent systems

Classic multi-agent systems assumed explicit protocols; LLM agents communicate in flexible language but become harder to verify.

Deploying real-world agents

Real users expose edge cases that scripted demos never touch.

AI agents for science

Scientific discovery is a pipeline of literature search, hypothesis generation, experiment design, analysis, and iteration.

Benchmark noise and evaluation

A model looks better or worse because the benchmark is noisy, not because the agent improved.

Multi-agent AI

One model context is too narrow for broad, parallel, open-ended work.

Training agentic models

Base and chat models know language, but agentic work needs persistence, tool discipline, and recovery from failure.

Post-training verifiable agents

Agents need training signals for long tasks, but many useful tasks do not have obvious step-by-step labels.

Agent system design evolution

Agent prototypes work in demos but fail when state, tools, latency, retries, and deployment versions interact.

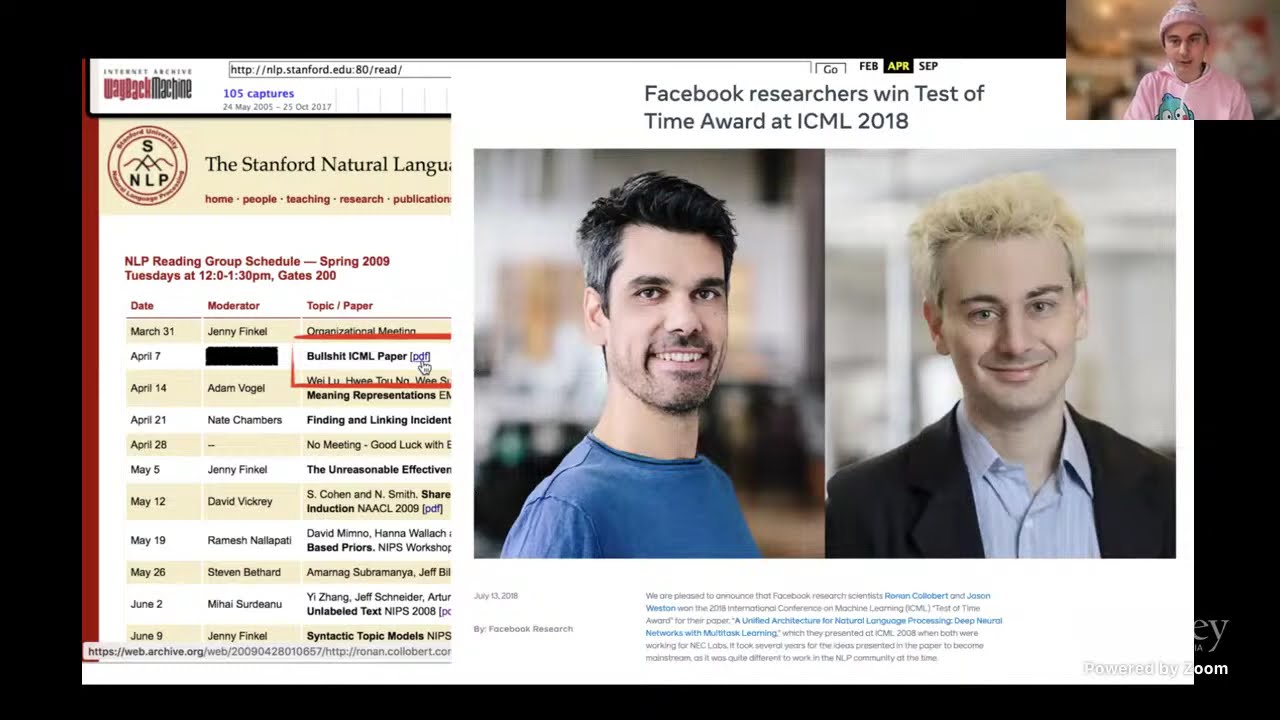

LLM agent foundations

A model can answer a prompt, but an agent must decide what to do next, which tools to use, and when to stop.

UC Berkeley CS294/194-280

Advanced LLM Agents MOOC Spring 2025

Safe and secure agentic AI

As agents gain tools and memory, security is no longer a prompt add-on; it is architecture.

Abstraction and discovery

Agents should not only solve one task; they should discover reusable abstractions that compress future tasks.

Informal plus formal math reasoning

A complete proof often needs informal planning before formal verification.

Autoformalization and theorem proving

Human math is informal; proof assistants require exact formal statements and tactics.

AlphaProof and formal math

Natural-language math reasoning is fragile; formal systems can verify proofs but are hard to search.

Perception to action

Computer-use agents must operate across real operating systems, not only benchmark websites.

Multimodal autonomous agents

Web and GUI tasks require seeing layout, reading text, choosing actions, and recovering from UI changes.

Code agents and vulnerability detection

Security bugs hide across files, execution paths, and tool outputs; static prompting misses them.

Open training recipes

Open models need reproducible paths to reasoning without secret proprietary data.

Memory and planning

Agents forget, repeat work, or plan against a false model of the environment.

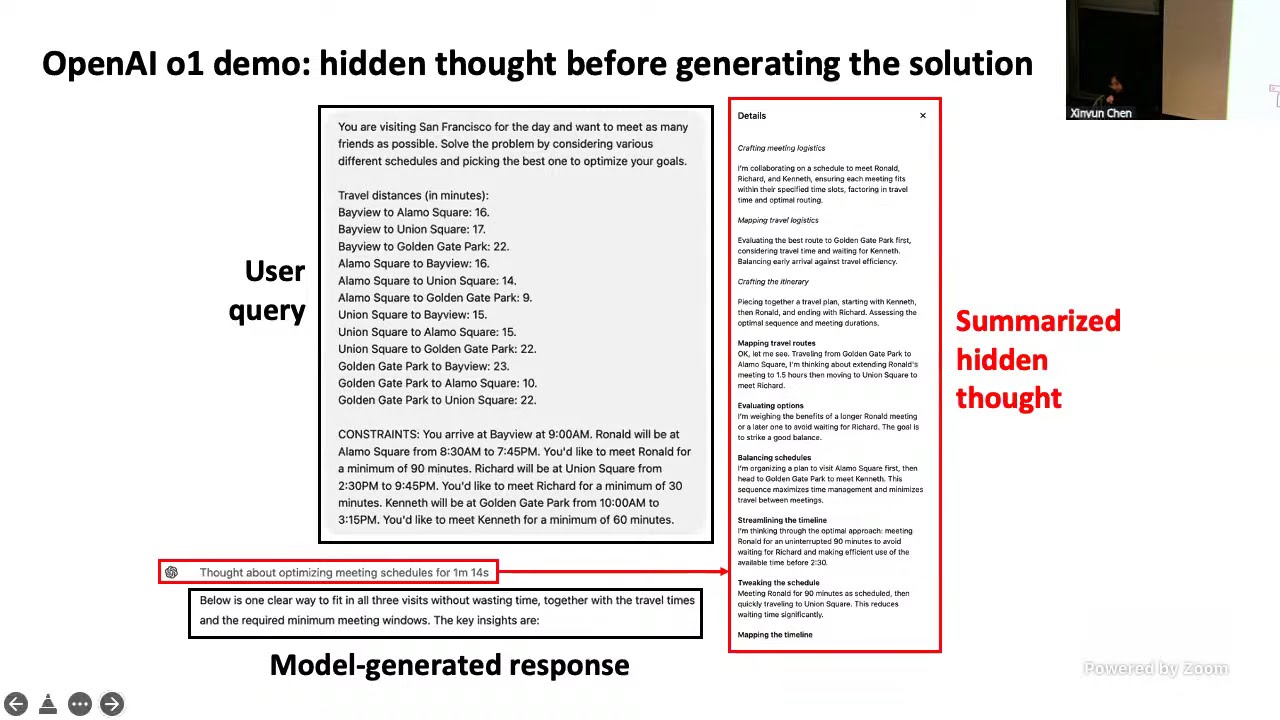

Learning to reason with LLMs

Reasoning behavior must be trained or elicited without simply teaching the model to produce longer text.

Inference-time reasoning

Some tasks need search at inference time because one sampled chain is fragile.